Please Don’t Understand This

Overview

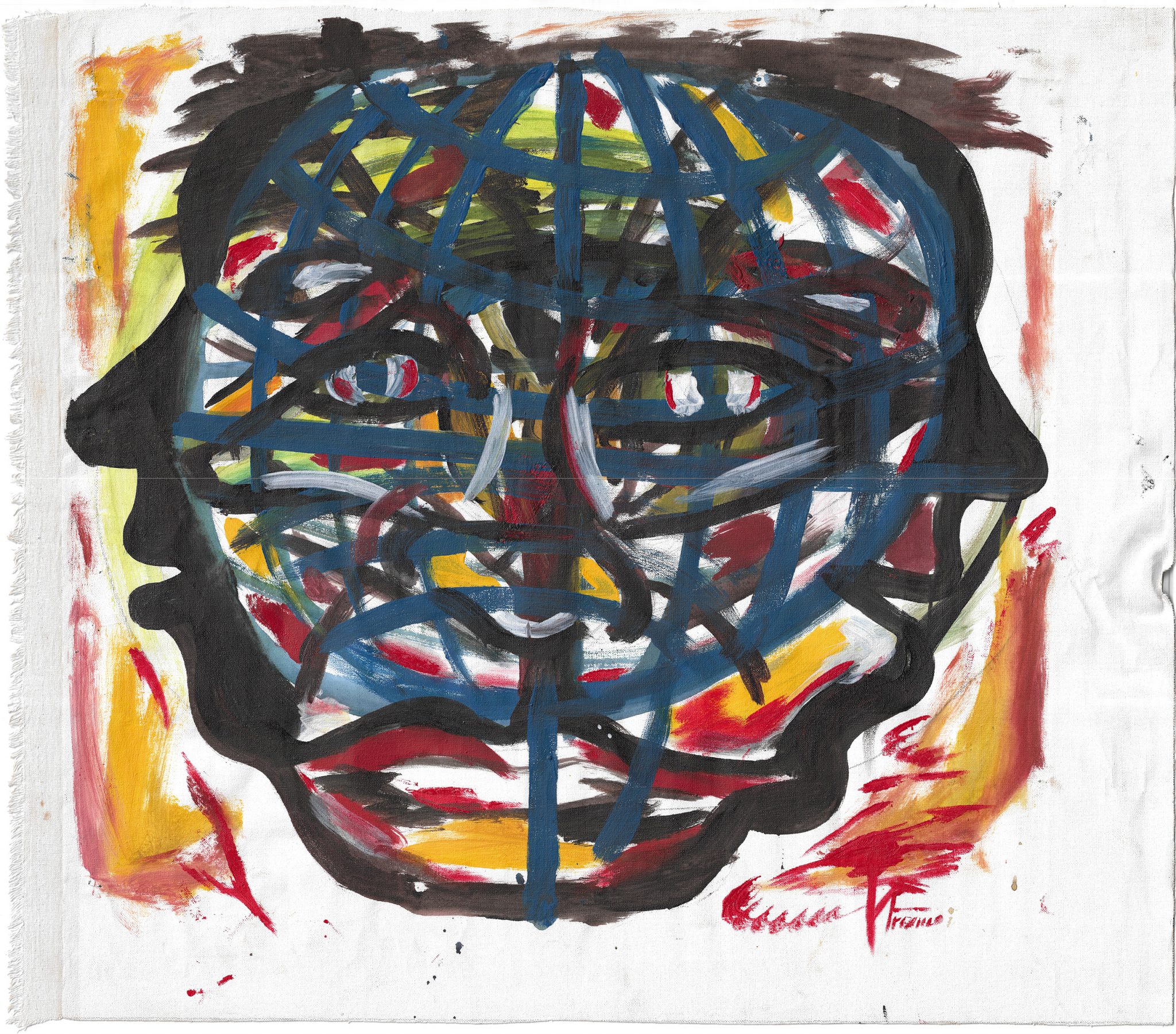

Please Don’t Understand This invited creators in communities closest to the impacts of algorithmic culture to imagine their own visual symbols and languages for sharing their experiences of living with artificial intelligence and surveillance. Works by artists in Beijing, China; Dzaleka, Malawi; and Cairo, Egypt were then shared with and remixed by diasporic creators based in Toronto. The goal was to communicate in ways that confuse those who administer these systems, and to offer an alternative to the abstract approaches of Western ethical traditions.

Much of the debate about AI, even within the artistic community, has focused on a small number of theoretical, ethical, and ideological principles, and on what should underpin policy and the public interest as these systems are deployed. Overwhelmingly, the ethics that emerge on all sides of the debate derive from Western ideologies and traditions.

However, the ultimate objective ought to be effective governance of these extremely powerful tools. Good governance requires meaningful participation from affected communities, and meaningful participation requires the literacy to discuss and explore socio-technical systems.

Taking ownership of our rituals and our theories transforms the fundamental assumptions on which our categories are based.

The current surge in the development and application of artificial intelligence does not rely on an organizing theory or an underlying explanation of why something is true. Algorithmic systems sift through enormous caches of data and find patterns; explaining those patterns, however, is rarely a priority. Yet an awareness of our ignorance encourages us to pursue new knowledge, while confidence in our models of the world does the opposite.

So how might we begin to invite others to describe their experiences of algorithmic culture in ways that reflect where they are, using symbolic languages that are legible to, and consistent with, the communities of which they are a part?

Please Don’t Understand This was one response to this question. It took the form of decentralized residencies in Dzaleka refugee camp in Malawi, in a traditional hutong in Beijing, China, and within an Arabic-speaking design community in Cairo, Egypt.

From the 1880s until the Second World War, migrant workers in the United States created and used their own secret language, placing markings on civil infrastructure such as fences and railway sites to help one another find assistance or avoid trouble. These “hobo” signs were usually drawn in chalk or coal.

Communities and subcultures have long developed their own systems, such as slang, hand signals, and visual images, to communicate outside official channels. In 2021, in collaboration with the Goethe-Institut Toronto and the Canada Council for the Arts, UKAI Projects set out to explore whether local symbolic systems might offer a fuller picture of the technological changes underway and provide greater access to conversations about those changes. This project, “Please Don’t Understand This,” draws on theories and rituals to map out new collective sense-making approaches to issues related to artificial intelligence and algorithmic culture.

Malawi is a nation in southeastern Africa formerly known as Nyasaland. It is bordered by Zambia, Tanzania, and Mozambique, and is nicknamed “the warm heart of Africa” because of the friendliness of its people. Dzaleka is the only permanent refugee camp in Malawi and has a population of approximately 40,000 refugees and asylum seekers, mainly from the Democratic Republic of Congo (DRC), Rwanda, and Burundi.

Based in Dzaleka, the Tumaini Festival, founded in 2014, is a large-scale cultural event created and run by refugees in collaboration with the local community. For its 2021 edition, we commissioned artists from the refugee camp and the surrounding community to develop their own visual, symbolic languages representing their fears about, hopes for, and understanding of artificial intelligence.

Concurrently, we commissioned similar inquiries in Beijing and Cairo. The overall goal was to involve the communities most affected by artificial intelligence and to encourage them to think and talk about the topic. We also asked the artists to consider how they might warn people that taking or posting a picture could create risks for others, and how they might signal to people that they were being watched without alerting those doing the watching. The work carried out in Malawi, China, and Egypt was then remixed, without accompanying context, by members of the Central African, Chinese, and Egyptian diasporas living in Canada.

The language and metaphors we use for AI are almost always drawn from Western frameworks. The project assumed that we need many ways to see issues if we hope to generate many ways to respond to them. This contrasts with the dominant view that there can only be one “correct” approach, technically accurate and ethically derived, which is administered and disseminated by experts.

Emergent ritualistic languages, by contrast, offer ways of working outside established, official ideologies. They communicate through metaphors drawn from the communities themselves.

Production Process

Site Selection:

Locations were selected based on 1) proximity to issues central to AI ethics discourse within Western ideological debates, 2) relationships with local partners willing to disintermediate the commissioning body (UKAI Projects), and 3) willingness to design and deliver the ‘residency’ in a way that felt locally relevant and appropriate. There were several false starts and shifts at this stage: an opportunity to deliver in Palestine was abandoned, and a partner in Germany disappeared.

Residency Delivery:

A consistent sum (around $5,000 CAD per site) was wired to each location for distribution to artists in whatever way was deemed appropriate locally. Residencies were then delivered in each location. The primary obstacles in this phase were 1) difficulties wiring money to certain locations due to enhanced restrictions and 2) concerns among local partners that their works might rest on a “wrong” understanding of AI. A local challenge also arose in Dzaleka: because the works were painted, they had to be scanned, and finding a high-quality scanner proved difficult and time-consuming for the organizers.

Diasporic Responses:

An open invitation was made to creators to join a workshop led by Monika Bielskyte, either in Toronto, Canada, or online, and then to provide their own responses to the works created at the three non-Canadian sites. A small honorarium ($150) was offered for each submission. We sought creators who identified as part of the broader diaspora connected to the initial sites of creation. Eleven responses were received in this way.

Physical Production:

Artist and designer Nour Bishouty took on the task of translating the works and accompanying texts into a print format appropriate to their content. The print publication uses only mass-produced stationery (paper, binding, stickers, and so on) appropriate to the regions of production. Each print edition was hand-assembled through improvised assembly practices, and both the buyers and the Toronto-based creators were invited to take part in this manufacturing process. A shared meal was part of this stage.

This project is generously supported by